The skinny on what's happening in AI - straight from the previous live session:

Highlights:

🖼️ Flux 2: Almost as Good as Nano Banana Pro, 5x Cheaper

Black Forest Labs dropped Flux 2, an open source image generation model. It's nearly matching Nano Banana Pro's quality on benchmarks but costs about 3 cents per image versus 15 cents. If you're building apps that generate lots of images, that's a massive difference.
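To put that gap in numbers, here's a rough back-of-the-envelope sketch in Python. The per-image prices are the ones quoted above; the 10,000 images a month is a made-up volume for illustration, and I'm assuming the prices are in US cents.

```python
# Per-image prices quoted above, in cents (integers avoid float rounding).
FLUX_2_CENTS = 3
NANO_BANANA_PRO_CENTS = 15


def monthly_cost_dollars(images_per_month: int, cents_per_image: int) -> float:
    """Total monthly spend in dollars for a given image volume."""
    return images_per_month * cents_per_image / 100


# Hypothetical volume, not a figure from the stream.
images = 10_000

flux_cost = monthly_cost_dollars(images, FLUX_2_CENTS)
nano_cost = monthly_cost_dollars(images, NANO_BANANA_PRO_CENTS)

print(f"Flux 2:          ${flux_cost:,.2f}")
print(f"Nano Banana Pro: ${nano_cost:,.2f}")
print(f"Ratio:           {nano_cost / flux_cost:.0f}x")
```

At that volume the difference is $300 versus $1,500 a month, and the 5x ratio holds at any volume.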

Kyle's take: I tested it live on stream. The London Christmas market with Day of the Dead theming came out pretty solid - Big Ben in every shot because how else would we know it’s London!

The big win here is that it generated multiple variations quickly, which is brilliant for iteration. But when I tried an infographic about making matcha lattes, the text started breaking. "Pour pour milk into katcha" - not quite right.

Nano Banana Pro would nail that because it routes everything through a reasoning model first. It works out the composition and the text, and only then generates the image.

Flux 2 is fantastic for photos and general imagery, but for infographics, slides, or anything text-heavy, Nano Banana Pro is still the winner.

The real story here is that it's open source - you can deploy it yourself via the GitHub repo or Hugging Face.

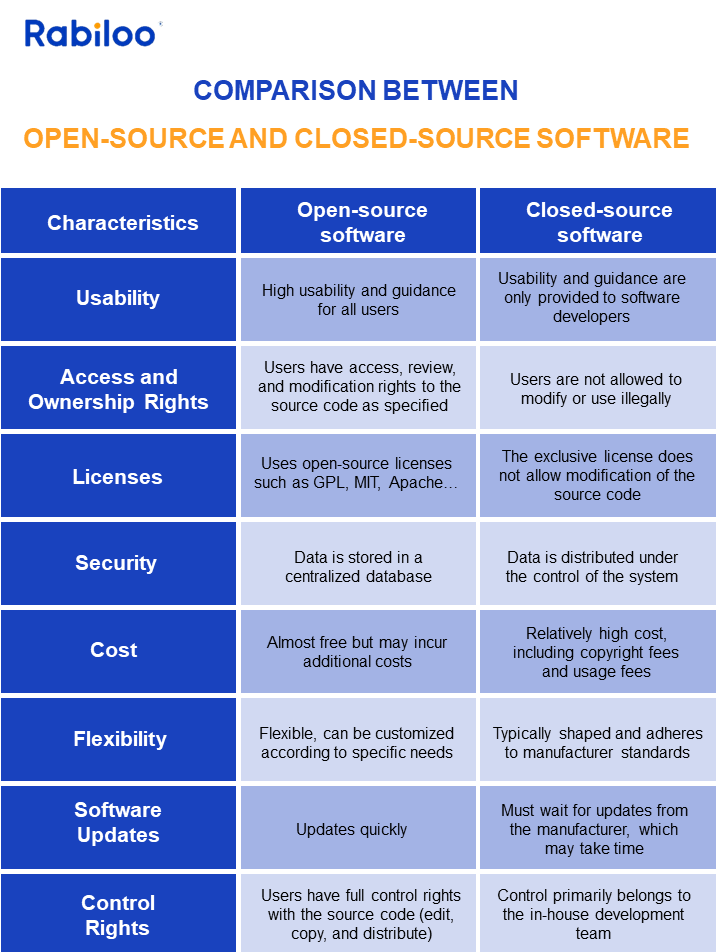

🔓 Open Source AI: Why It Actually Matters

Because yesterday was a relatively light news day, I used Flux 2 as an excuse to properly explain open source! The Chinese labs - DeepSeek, Qwen - are going all-in on open source. The American labs - OpenAI, Anthropic, Google - are locking everything down. Meta was the exception with Llama, but Zuckerberg recently said they're stopping that.

Kyle's take: Using an open source model is very attractive if you are building a product. Lots of YC startups, for example, are using Chinese models over US ones. Not because they love China, but because open source lets you download the model, deploy it locally, fine-tune it and customise it.

With closed source, you're just renting access via API - zero control over the actual model.

What about security? If you're worried about data going to China, running a local Chinese model is actually safer than using ChatGPT. The model sits on your device. It's not connected to anything. You could run it on a computer with no internet. Whereas with OpenAI, every request goes to their servers. Bit ironic, that…

💻 How to Actually Run Local AI Models

If you do want to run a local model on your device, how do you actually do that?

On iPhone, use Locally AI - it's free, doesn't need signup, just download and pick a model. For Android, there's something called SmolChat (2 stars on Play Store, not personally recommending it, but it exists!).

On Mac, use LM Studio - also free, dead simple interface.

All the models live on Hugging Face - you clone them, download them, run them.
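If you'd rather script the download than click through an app, here's a minimal sketch using the `huggingface_hub` Python package (`pip install huggingface_hub`). The helper names and the `models` folder are my own choices for illustration, and you still need something like LM Studio or another runtime to actually run what you download.

```python
from pathlib import Path


def local_dir_for(repo_id: str, base: str = "models") -> Path:
    """Map a Hub repo id like 'org/name' to a flat local folder (hypothetical layout)."""
    return Path(base) / repo_id.replace("/", "__")


def download_model(repo_id: str, base: str = "models") -> Path:
    """Download every file in a Hugging Face model repo into a local folder."""
    # Imported lazily so this file still loads if the package is not installed.
    from huggingface_hub import snapshot_download

    target = local_dir_for(repo_id, base)
    # snapshot_download fetches the whole repo and returns the local path.
    local_path = snapshot_download(repo_id=repo_id, local_dir=str(target))
    return Path(local_path)
```

Calling `download_model("Qwen/Qwen2.5-0.5B-Instruct")` would pull a small Qwen model into `models/Qwen__Qwen2.5-0.5B-Instruct`. That repo is just an example of a small model; swap in whichever one you want to try.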

The models won't be as good as Claude or ChatGPT, but they're private. Nothing leaves your device. Honestly it’s worth doing just to understand how this stuff works. I'd treat it as a fun side project rather than a production tool.

Now, you can also take an existing open source model and fine-tune it. That’s when you train the model further to adapt it to your specific needs. That’s a different matter - something I’ll cover another time!

Kyle's take: Short answer: no. Long answer: also no. The UK produces brilliant researchers - people from DeepMind, Demis Hassabis types. Then American labs poach them because they pay a hundred times more.

DeepMind was bought by Google in 2014 for $400 million, which seemed insane at the time and now looks like one of the best acquisitions in history.

When I see EU announcements like "we're investing €100 million in AI" I genuinely think it's wasted money. You're behind, and you're underspending.

Elon's Colossus data centre has 50,000 H200 GPUs. One H200 costs ~$30,000. That's $1.5 billion just in chips - and that's just one data centre from one company.
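Here's that arithmetic as a quick sketch, using the GPU count and rough unit price quoted above. The comparison with a €100 million budget is my own illustration: it treats euros and dollars as roughly equal and ignores everything except chips (power, buildings, networking, staff).

```python
# Figures quoted above.
GPU_COUNT = 50_000
PRICE_PER_GPU_USD = 30_000  # approximate

chip_cost_usd = GPU_COUNT * PRICE_PER_GPU_USD
print(f"Chips alone: ${chip_cost_usd / 1e9:.1f} billion")

# Assumption: EUR and USD at parity, budget spent on chips only.
BUDGET = 100_000_000
gpus_affordable = BUDGET // PRICE_PER_GPU_USD
share_of_data_centre = gpus_affordable / GPU_COUNT

print(f"GPUs a 100 million budget buys: {gpus_affordable:,}")
print(f"Share of that one data centre:  {share_of_data_centre:.1%}")
```

Under those assumptions, €100 million buys a few thousand chips, a single-digit percentage of that one data centre.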

The table stakes just to play now are in the billions, if not hundreds of billions.

Europe's building something with a fraction of that, three years from now. Too slow, not enough, too late. This is a two-horse race between America and China. Everyone else is watching.

Member Question: "If you want to create an AI model or app that addresses a consumer issue, where should you start?"

Kyle's response: These are two very different things. Building your own AI model means training from scratch or fine-tuning something like DeepSeek - doable but expensive and (probably) not necessary.

Building an app that uses AI is much simpler: you connect to ChatGPT or Claude via API, send user requests, get responses back, pay a fraction of a penny per interaction.
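For the more technical readers, here's roughly what "connect via API" looks like, sketched with only Python's standard library against OpenAI's Chat Completions endpoint. The function names and the `gpt-4o-mini` default are my choices for illustration, so check the current model list and pricing before relying on it. A real app would more likely use the official SDK and add error handling.

```python
import json
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_request(user_message: str, api_key: str,
                  model: str = "gpt-4o-mini") -> urllib.request.Request:
    """Package one user message as a Chat Completions HTTP request."""
    payload = {
        "model": model,  # assumed model name; swap for whichever you use
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(user_message: str, api_key: str) -> str:
    """Send the request and return the text of the first reply."""
    request = build_request(user_message, api_key)
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a key from platform.openai.com stored in an environment variable, `ask("Suggest a name for a matcha cafe", os.environ["OPENAI_API_KEY"])` sends one request and returns the reply text. Tools like Lovable wrap this same request-and-response loop for you.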

That's it. If you have no coding experience, start with Lovable - it handles all the API stuff for you automatically. If you're more technical, use Cursor and set up API keys through platform.openai.com. For agents specifically, start with n8n to understand workflows visually before building from scratch.

Member Question: "Can you keep a version of GPT-4o? OpenAI want to retire it."

Kyle's response: Unfortunately not. OpenAI doesn't release their weights and biases - it's closed source. To keep a model locally, you'd need to download the actual parameters and deploy them yourself. OpenAI doesn't allow that.

They're deprecating the API on 16th February, right after Valentine's Day, which seems cruel given how many people have romantic relationships with 4o!

Your only option would be lobbying them to open source it rather than just deprecating it - they're essentially saying "we don't want this model anymore" so they could theoretically release it. But I wouldn't hold my breath.

Want the full unfiltered discussion? Join me tomorrow for the daily AI news live stream where we dig into the stories and you can ask questions directly.

Streaming on YouTube (with screen share) and TikTok (follow and turn on Live notifications).