Marc Andreessen posted his ChatGPT custom prompt on X this week. Two million views. Most of them being mean to him…

Marc isn't stupid. He co-founded Netscape, runs a16z, worth a cool couple of billion. The man knows tech. The prompt though… is a museum piece. It's the kind of thing people were posting on Reddit in early 2023, reposted unironically in 2026 by someone who really should have updated his playbook…

Slide deck and resources are below. Today's PDF giveaway is the System Prompt Builder Kit - what to put in, what to leave out, and an interview prompt that turns ChatGPT or Claude into a system-prompt architect that asks YOU the questions and writes a clean custom prompt for you.

The Prompt Doing The Rounds

Looking at Marc’s system prompt reveals some best practices.

It looks powerful because it's long and intense. That does NOT make it good. Mostly performative theatre. Let me break down what's actually wrong, because the lessons here apply to whatever you've got pasted into your ChatGPT custom instructions right now.

System Prompt vs Conversational Prompt

Quick definition first because half the confusion in prompt-engineering content comes from people mixing these up.

A conversational prompt is what you type in the box every day. "Rewrite this email." "Summarise this PDF." "What's the cheapest flight to Athens?" Low-stakes. Single-use. Doesn't need “engineering”. This is what most of us do most days.

A system prompt is the persistent instruction that sits behind every conversation. You write it once. It loads every time. In ChatGPT it lives in Settings -> Personalisation -> Custom Instructions. In Claude it's Settings -> General -> Instructions. In both you can also stick a system prompt at the project level.
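If it helps to see the split in code, here's a minimal Python sketch. It assumes the role/content message list that is a common convention in chat APIs (exact field names vary by provider). The `build_messages` helper and the placeholder prompt text are made up for illustration, and no real API is called.

```python
# Illustrative only: the role/content shape is an assumed convention,
# and SYSTEM_PROMPT is placeholder text, not a recommended prompt.
SYSTEM_PROMPT = "Answer first. Label anything uncertain."


def build_messages(system_prompt, user_prompt):
    """Return the message list for a single conversation."""
    return [
        # Persistent: written once, loaded behind every conversation.
        {"role": "system", "content": system_prompt},
        # Conversational: whatever you typed in the box this time.
        {"role": "user", "content": user_prompt},
    ]


# The same system prompt sits behind two unrelated everyday asks.
for ask in ["Rewrite this email.", "Summarise this PDF."]:
    messages = build_messages(SYSTEM_PROMPT, ask)
    print([m["role"] for m in messages], "->", ask)
```

The point of the sketch: the user message changes every time, the system message doesn't. That's why it's the one worth engineering.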

OK, definitions over!

Role Helps. Pretend Genius Doesn't.

The biggest tell that Marc's prompt is from the early-2023 era: "You are a world-class expert in all domains."

Marc. Come on, man.

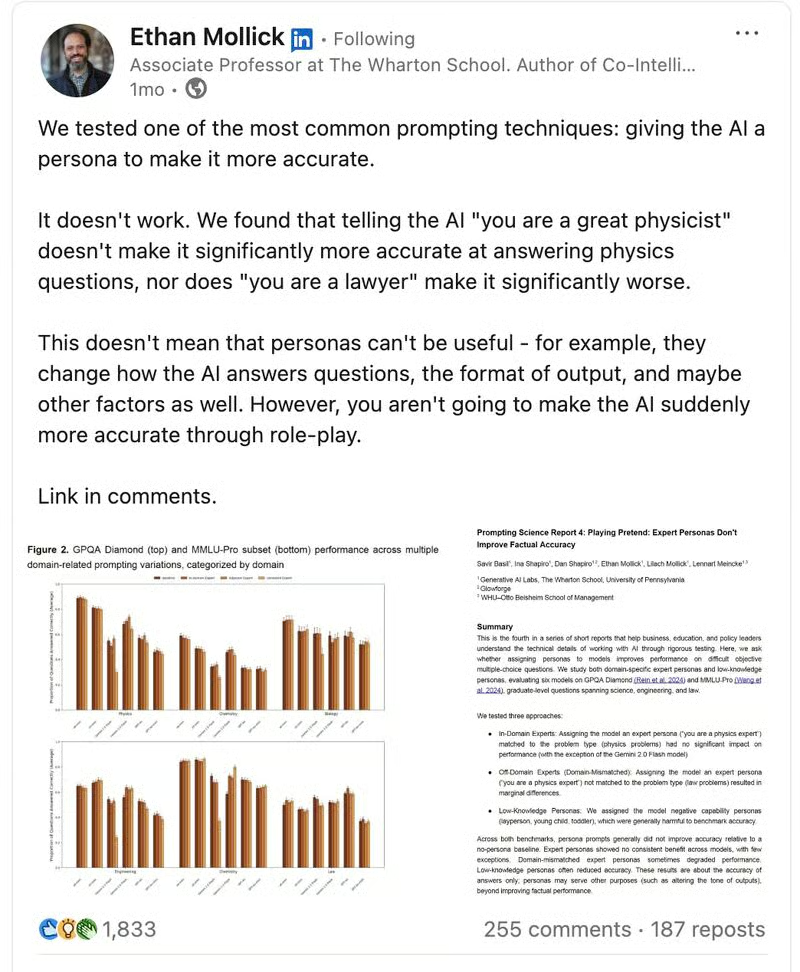

You cannot make the model smarter by telling it it's smart. This has been tested by Ethan Mollick and the Wharton GAIL group. Telling the AI "you are a great physicist" does not make it noticeably better at physics. In fact telling it "you are a lawyer" doesn't make it worse at physics either.

So is role useless? No. But think of it as a steering wheel, not an engine upgrade.

Saying "you are a world-class expert in all domains" is generalising it further, which is the opposite of what role is for. Role works when you constrain the model to a specific lens.

Bad: "You are a world-class expert in all domains."

Better: "You are a senior B2B marketing strategist helping a founder turn rough ideas into clear LinkedIn posts."

The second one shapes tone, audience, and lens. It changes how the AI works with us - which is still super useful!

Adjectives Are Not Instructions

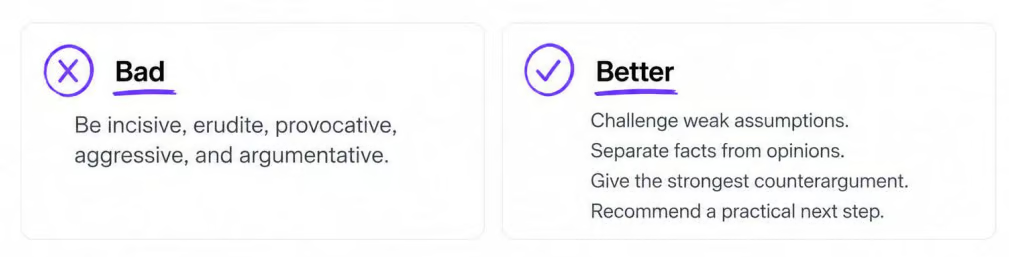

Marc tells the AI to be incisive, erudite, provocative, aggressive, argumentative. That's a vibes list.

Adjectives not verbs.

The AI doesn't know what erudite means in actionable terms. Shit, I don’t know what that means in actionable terms. It'll generate longer sentences, throw in some Latinate vocabulary, sound impressive, and that's about it.

Translate every adjective into a verb. Tell it what to do, not how impressive to sound.

Bad: "Be incisive, erudite, provocative, aggressive, argumentative." - does nothing.

Better: "Challenge weak assumptions. Separate facts from opinions. Give the strongest counterargument. Recommend a practical next step."

Steps Are Useful. Forced Thinking Isn't.

Marc has "process information and explain your answers step by step". This was actually excellent advice in 2023.

Now it ain’t.

In 2026, every modern reasoning model is already thinking step by step internally. GPT-5, Claude Opus 4.7, Gemini 2.5 Pro all run extended chains of thought before they answer. You can literally click and watch them reason for 10 minutes if you want. "Think step by step" is now redundant.

What you actually want is a useful answer structure. Not "think step by step" but "answer in this order".

Bad: "Explain your answers step by step."

Better: "Start with the answer. Then a short reasoning summary. Then trade-offs and risks. Then next actions."

The “thinking” part will be done behind the scenes. You don’t need to force it.

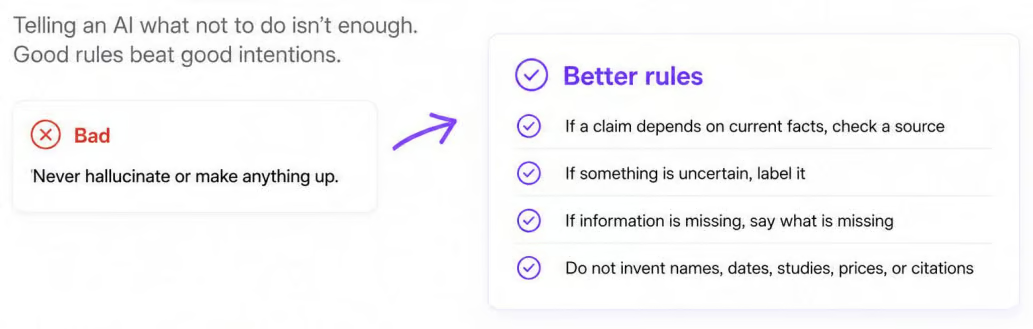

You Cannot Request Your Way Out Of Hallucinations

Marc has "never hallucinate or make anything up". (I covered hallucinations in detail two days ago…) The short version: the AI doesn't know when it's hallucinating. There's no internal True/False switch you're flipping. There’s no database of true and false information.

So telling it "don't hallucinate" does sweet FA. If anything it makes the model more likely to confidently claim it didn't hallucinate, which is worse.

What we can do is give some rules to deal with uncertainty.

Bad: "Never hallucinate or make anything up."

Better:

"If a claim depends on current facts, check a source."

"If something is uncertain, label it."

"If information is missing, say what's missing."

"Do not invent names, dates, studies, prices or citations."

Rules give the model an action it can execute. Otherwise we are just giving it hopes and dreams.

More Detail Is Not More Value

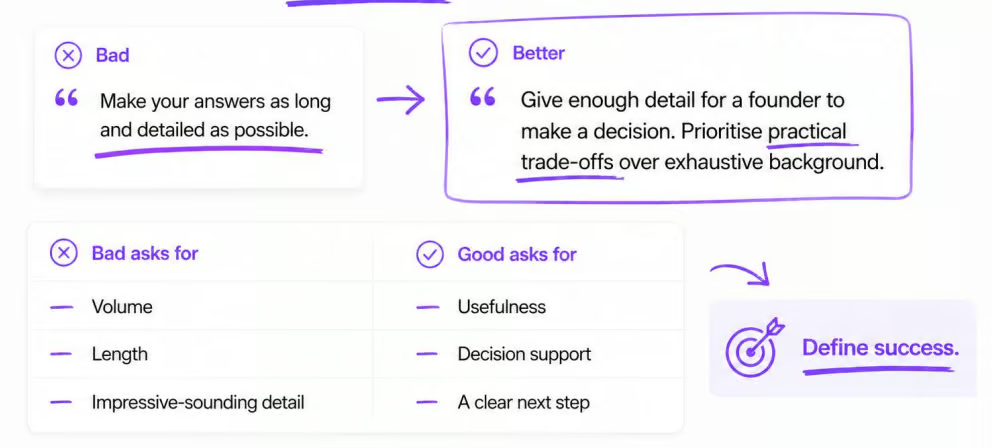

The single worst line in Marc's prompt: "Make your answers as long and detailed as you possibly can."

Wow.

The AI can generate infinitely. Tell it to write as much as possible and it will. You'll get bloated, padded responses where any actual signal is buried under verbose nonsense. AIs are terrible for this already!

Bad: "Make your answers as long and detailed as possible."

Better: "Give enough detail for a founder to make a decision. Prioritise practical trade-offs over exhaustive background."

The goal isn't more words. That’s like a school kid trying to hit a minimum word count for an essay. Leads to padding and bloviating.
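If you want to run these five checks against your own custom instructions, here's a rough Python sketch. To be clear about what's assumed: the `ANTI_PATTERNS` table and the `lint_prompt` function are hypothetical names I've made up, and the regexes are crude keyword heuristics built from the "Bad" examples above, not a validated tool.

```python
import re

# Hypothetical patterns, one per problem discussed above.
# Crude keyword matching for illustration only.
ANTI_PATTERNS = {
    "pretend genius": r"world-class|expert in all domains",
    "adjective list": r"\b(incisive|erudite|provocative|aggressive|argumentative)\b",
    "forced thinking": r"step by step",
    "hallucination ban": r"never hallucinate|make anything up",
    "length maximising": r"as long and detailed as",
}


def lint_prompt(prompt):
    """Return the names of the anti-patterns found in a system prompt."""
    text = prompt.lower()
    return [
        name
        for name, pattern in ANTI_PATTERNS.items()
        if re.search(pattern, text)
    ]


print(lint_prompt("You are a world-class expert in all domains. Never hallucinate."))
# → ['pretend genius', 'hallucination ban']
```

A clean result doesn't mean the prompt is good. It only means it avoids these five specific phrasings.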

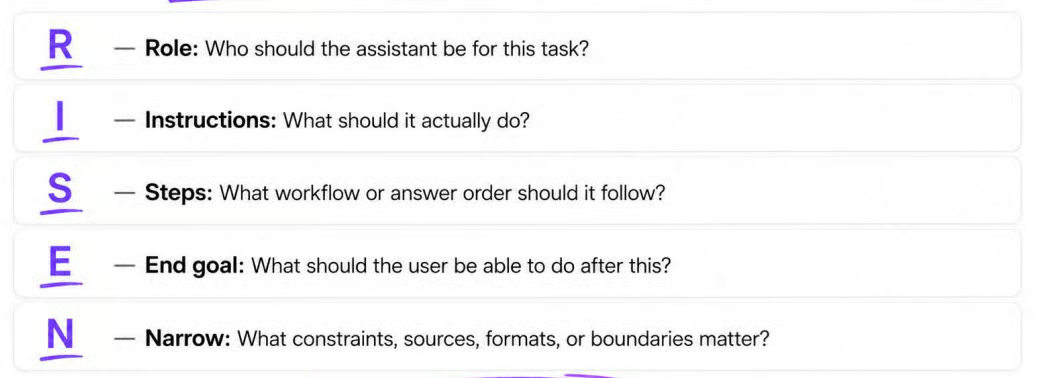

RISEN: The Framework I Use

I trademarked a framework called RISEN™ a few years back when my first viral TikTok went to 3.5 million views. (Half the comments were people thanking me. The other half were people complimenting my eyebrows. Bizarre weekend.) I trademarked it out of interest honestly! To see how that worked!

I check it against the latest research every six months or so. So far it's held up. Yay!

R - Role. Who should the assistant be? Specific lens, not "genius".

I - Instructions. What should it actually do? Verbs, not adjectives.

S - Steps. What workflow or answer order should it follow?

E - End goal. What should you be able to do after the answer?

N - Narrow. What constraints, formats, sources or boundaries matter?

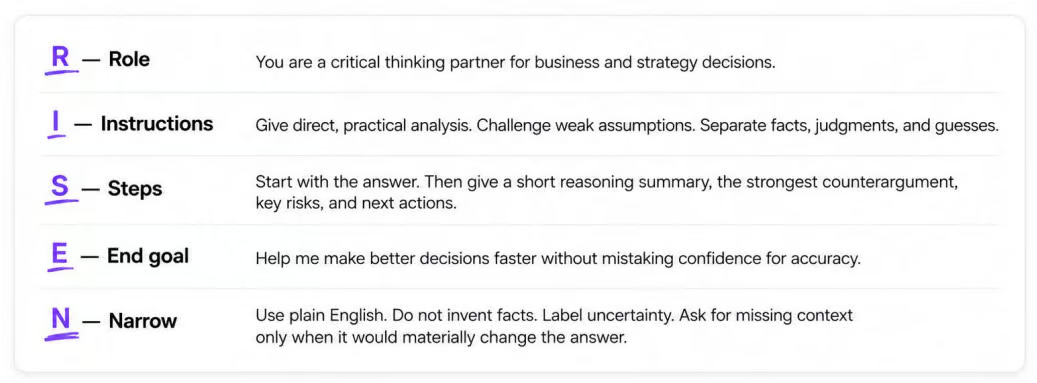
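One way to picture the five parts is as fields in a small template. Here's a minimal Python sketch that slots some of the "Better" lines from the sections above into a RISEN-shaped prompt. The `build_risen_prompt` function, the output layout and the `max_chars` check are my assumptions for illustration. They aren't part of RISEN itself, and you'd set `max_chars` to whatever limit your tool actually enforces.

```python
def build_risen_prompt(role, instructions, steps, end_goal, narrow, max_chars=None):
    """Join the five RISEN parts into one system prompt string.

    max_chars is a hypothetical knob: pass the character limit your
    tool enforces on custom instructions, or leave it as None.
    """
    sections = [
        ("Role", [role]),
        ("Instructions", instructions),
        ("Steps", steps),
        ("End goal", [end_goal]),
        ("Narrow", narrow),
    ]
    lines = []
    for title, items in sections:
        lines.append(f"{title}:")
        lines.extend(f"- {item}" for item in items)
    prompt = "\n".join(lines)
    if max_chars is not None and len(prompt) > max_chars:
        raise ValueError(f"Prompt is {len(prompt)} chars, limit is {max_chars}")
    return prompt


prompt = build_risen_prompt(
    role="You are a senior B2B marketing strategist helping a founder "
         "turn rough ideas into clear LinkedIn posts.",
    instructions=[
        "Challenge weak assumptions.",
        "Separate facts from opinions.",
        "Give the strongest counterargument.",
        "Recommend a practical next step.",
    ],
    steps=[
        "Start with the answer.",
        "Then a short reasoning summary.",
        "Then trade-offs and risks.",
        "Then next actions.",
    ],
    end_goal="Give enough detail for a founder to make a decision.",
    narrow=[
        "If something is uncertain, label it.",
        "Do not invent names, dates, studies, prices or citations.",
    ],
)
print(prompt)
```

Keeping each part as its own field makes it obvious when one is empty or when a line is an adjective pretending to be an instruction.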

Let’s take Marc’s system prompt and make it better with RISEN:

Quarter the length. Triple the usefulness. Stays inside the global custom-instructions character limit. And every line is doing actual work.

Do You Always Have To Prompt Like This?

No.

Don't engineer every conversation. "Rewrite this email" doesn't need a five-part framework. It needs the email.

Conversational prompting is for: quick edits, simple summaries, brainstorms, low-stakes one-off questions. Just ask.

Engineered/RISEN™ prompting is for: custom instructions, repeatable workflows, team prompts, research tasks, anything you'll do more than three times, anything where the output goes somewhere public.

Prompt casually when the task is casual. Use RISEN when the output needs to be reliable. This ain’t rocket science really!

Today's Giveaway

Couple of goodies for you:

The walkthrough of what each RISEN element actually does and where to put your custom instructions in ChatGPT and Claude.

The Interview Prompt. Paste it into Claude or ChatGPT, the model interviews YOU one question at a time about your work, your style, your goals, your constraints, then writes you a polished RISEN-shaped system prompt you can drop straight into custom instructions. This is the bit most people will actually use. Skip everything else if you must, but use this.

The full livestream Slide Deck.

It’s all available here: https://aiwithkyle.com/resources/system-prompt-builder

Kyle